FEA predicted failure: 8,000 psi

Actual burst test: 5,700 psi

Sometimes the opposite happens - tests outperform the simulation. Either way, the mismatch raises an important question: how much can we rely on simulation alone?
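To put the opening mismatch in numbers, here is a minimal sketch that uses only the two pressures quoted above. The function name and the two metrics are illustrative choices, not part of any standard.

```python
def prediction_gap(predicted_psi: float, actual_psi: float) -> dict:
    """Compare an FEA-predicted failure pressure with a measured burst pressure."""
    if predicted_psi <= 0 or actual_psi <= 0:
        raise ValueError("pressures must be positive")
    return {
        # Fraction of the predicted pressure that the part actually reached.
        "test_to_fea_ratio": actual_psi / predicted_psi,
        # How far the simulation overshot, relative to the measured value.
        "overprediction_pct": 100.0 * (predicted_psi - actual_psi) / actual_psi,
    }


gap = prediction_gap(predicted_psi=8000.0, actual_psi=5700.0)
print(f"test / FEA ratio: {gap['test_to_fea_ratio']:.2f}")      # 0.71
print(f"FEA overprediction: {gap['overprediction_pct']:.1f}%")  # 40.4%
```

In this example the part failed at roughly 71% of the simulated failure pressure, which means the simulation overshot the test by about 40%.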

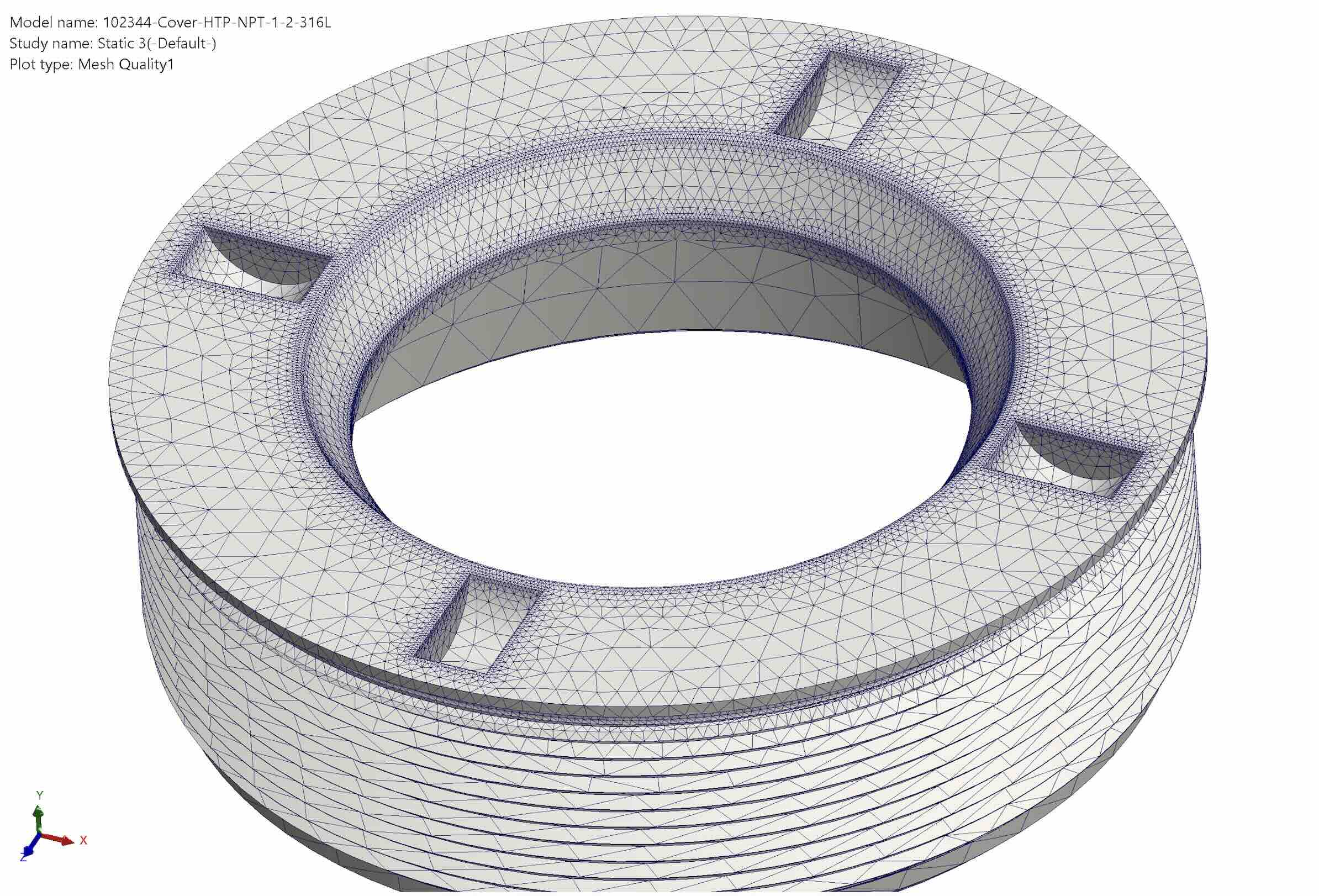

Physical testing is expensive and time-consuming. Test rigs must be built, parts manufactured, and instrumentation installed. Each design revision restarts the cycle. Because of this cost, Finite Element Analysis (FEA) plays a central role in modern engineering workflows, provided its results reliably match reality.

Computer-aided design (CAD) has achieved that level of reliability. What you see is what you get. With FEA, however, what you see in the simulation is not always how the part behaves in the real world. Mechanical components are influenced by factors that traditional simulation tools struggle to capture:

These details often determine the difference between a safe design and a failure point. FEA cannot account for them. What does account for these nuances is experience.

Is AI just a glorified autocomplete? Not really, although 2026 is starting to feel like the year of the AI frenzy. The history of bubbles has been chronicled by many sources, and the accounts share one common denominator: emotional decision-making. From tulips to dot-com, humans are emotional creatures.

Well, 2026 is upon us and AI is here, so how about a practical use case: simulations informed by prior experience. AI-assisted simulations are beginning to emerge. Companies like PhysicsX are developing systems that combine physics simulation with machine learning. These types of AI models do not require massive worldwide data centers. Servers at a research university or a large engineering company could perhaps be augmented to handle the load.

Instead of relying solely on theoretical models, AI can learn from real engineering history such as past test results, previous simulations, and manufacturing instructions. The system begins to identify patterns between what simulations predict and what actually happens in testing. These AI models are non-opinionated and reality-bound, with one goal in mind: FEA results that predict actual product performance.

Rather than replacing FEA, AI augments it by helping engineers understand where and why simulations diverge from reality.
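As a rough illustration of learning from paired history, the sketch below fits a single scale factor between FEA predictions and measured burst pressures. This is a deliberately simple stand-in (a least-squares line through the origin), not a description of how PhysicsX or any other platform works. The function names are hypothetical, and the only data used is the one prediction/test pair quoted at the top of this article; a real history would hold many pairs and call for a far richer model.

```python
from typing import Sequence


def fit_correction_factor(predicted: Sequence[float], measured: Sequence[float]) -> float:
    """Least-squares scale factor k such that measured ~= k * predicted."""
    if len(predicted) != len(measured) or len(predicted) == 0:
        raise ValueError("need equally sized, non-empty histories")
    numerator = sum(p * m for p, m in zip(predicted, measured))
    denominator = sum(p * p for p in predicted)
    if denominator == 0:
        raise ValueError("predicted values must not all be zero")
    return numerator / denominator


def corrected_prediction(fea_psi: float, k: float) -> float:
    """Scale a new FEA prediction by the factor learned from past tests."""
    return k * fea_psi


# Assumption: the history holds only the single pair quoted in this article.
history_predicted = [8000.0]
history_measured = [5700.0]

k = fit_correction_factor(history_predicted, history_measured)
print(f"correction factor: {k:.4f}")  # 0.7125 with this single pair
```

With one pair, the fit simply reproduces the test-to-FEA ratio. The value of the approach appears only once many designs, materials, and test conditions feed the history.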

Many engineering organizations possess decades of valuable experimental data, yet much of it is tribal knowledge locked in silos within the company. Test reports, lab notebooks, and old simulation files are not readily shared or baselined for future designs.

AI platforms aim to change that by converting scattered engineering records into a continuous learning system. For example, a platform like PhysicsX can integrate:

The result is a living engineering model that improves with every simulation and product build.

The year 2026 marks a broader shift in engineering culture. The digital world has moved from an attention economy, where visibility earns quick likes and drives value, toward a merit economy, where experience builds trust and results drive value.

Engineering is no exception. Data alone is not enough - experience captured in data becomes the real asset. Companies that successfully transform historical test results into usable knowledge will gain a major advantage.

A cue from another industry is Lilli, developed by McKinsey. It is an internal generative-AI assistant that searches and synthesizes McKinsey's entire knowledge base - more than 100 years of reports, research, and internal documents. Instead of reinventing the analysis for every project, consultants can:

Other consulting firms are developing their own generative-AI agents, turning a labor-intensive research business into an AI-augmented knowledge platform that reduces junior work and accelerates projects. Value is shifting toward strategic insight and technology implementation.

Prediction: design review meetings will soon start with the question "Have you asked Lilli?" rather than diving straight into the PowerPoint deck. The danger is that AI models may be trained on obsolete or outdated information. In engineering this is less of a risk than in consulting, because physics does not change. Obsolescence of a product does not invalidate the engineering work that went into its development. That data is still valid as a baseline.

At Encole, we routinely correlate FEA predictions with actual burst test results. The two rarely match perfectly. Our baseline FEA setup typically includes the following:

After several iterations of both design and simulation setup, the calculated minimum factor of safety often falls short of the desired 2.5 safety margin - despite physical tests demonstrating acceptable performance.

This gap between simulation and reality highlights a limitation of traditional analysis.

During hydrostatic pressure testing, Encole sight glasses are regularly subjected to pressures exceeding 10,000 psi without failure.

For brittle crystalline materials such as sapphire or other optical materials, engineers often design with a factor of safety (FOS) of around 2.5 for typical industrial applications.

In mission-critical systems, where failure is measured in lost missions or lost lives, the factor of safety rises to 7 or higher. Higher safety margins, however, come at a cost: larger, heavier, and more expensive components. This is why accurate prediction matters.
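The cost of a higher safety factor is easy to see with a little arithmetic. The sketch below assumes the simplest pressure-based definition, FOS = failure pressure / allowable working pressure, and treats the 10,000 psi test figure quoted above as a stand-in for failure pressure. That is an assumption: those tests ended without failure, so the true burst pressure is higher. The function name is illustrative.

```python
def allowable_working_pressure(failure_psi: float, factor_of_safety: float) -> float:
    """Working pressure permitted for a given failure pressure and FOS."""
    if failure_psi <= 0:
        raise ValueError("failure pressure must be positive")
    if factor_of_safety < 1:
        raise ValueError("factor of safety must be at least 1")
    return failure_psi / factor_of_safety


# Assumption: 10,000 psi is used as a conservative stand-in for burst pressure.
for fos in (2.5, 7.0):
    rating = allowable_working_pressure(10_000.0, fos)
    print(f"FOS {fos}: rated for about {rating:,.0f} psi")
# FOS 2.5: rated for about 4,000 psi
# FOS 7.0: rated for about 1,429 psi
```

Under these assumptions, the same component that could be rated for about 4,000 psi at an FOS of 2.5 is rated for only about 1,429 psi at an FOS of 7. Reaching the original rating at the higher factor requires a larger, heavier part.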

For now, the engineering process remains clear: simulation and testing must work together. FEA provides insight and accelerates design exploration. Physical testing is used for validation. Both are essential during engineering due diligence.

At Encole, we continue to rely on rigorous testing while exploring new tools that bridge the gap between simulation and real-world performance. AI-augmented engineering may be the next step toward aligning what we simulate with what actually happens. For the time being, we largely rely on physical testing.